Is it impossible to build general artificial intelligence?

Review of Why Machines Will Never Rule the World: Artificial Intelligence without Fear, by Jobst Landgrebe & Barry Smith, 2025

Before we start this book review, consider these quotes about the possibility of nuclear power:

Albert Einstein, 1922

Translation by Henry L. Brose (Einstein the Searcher: His Work Explained from Dialogues with Einstein, 1922, p. 24):

At present there is not the slightest indication of when this energy will be obtainable, or whether it will be obtainable at all. For it would presuppose a disintegration of the atom effected at will—a shattering of the atom. And up to the present there is scarcely a sign that this will be possible. We observe atomic disintegration only where Nature herself presents it, as in the case of radium, the activity of which depends upon the continual explosive decomposition of its atom. Nevertheless, we can only establish the presence of this process, but cannot produce it; Science in its present state makes it appear almost impossible that we shall ever succeed in so doing.

Robert Millikan, 1928

From "Available Energy" (Messel Memorial Lecture, 4th September, 1928) [DOI: 10.1002/jctb.5000474013]:

The energy available to him through the disintegration of radioactive, or any other, atoms may perhaps be sufficient to keep the corner peanut and pop-corn man going on a few street corners in our larger towns for a long time yet to come, but that is all.

The energy available to him through the building up of the common elements out of the enormous quantities of hydrogen existing in the waters of the earth would be practically unlimited provided such atom-building processes could be made to take place on the earth. But the indications of the cosmic rays are that these atom-building processes can take place only under the conditions of temperature and pressure existing in interstellar space. Hence there is not even a remote likelihood that man can ever tap this source of energy at all. The hydrogen of the oceans is not likely ever to be converted by man into helium, oxygen, silicon, or iron.

Ernest Rutherford, 1933

1933-09-11 address at Leicester to the British Association for the Advancement of Science (quoted in New York Herald Tribune, "Atom-Powered World Absurd, Scientists Told: Lord Rutherford Scoffs at Theory of Harnessing Energy in Laboratories", 1933-09-12, through The Associated Press, reproduced in History of Physics, 1985, p. 117):

The energy produced by the breaking down of the atom is a very poor kind of thing. Any one who expects a source of power from the transformation of these atoms is talking moonshine. ... We hope in the next few years to get some idea of what these atoms are, how they are made and the way they are worked.

And yet they were profoundly wrong (nuclear power was demonstrated in 1951, and of course, the bomb-versions in 1945), even though they had quite technical arguments on their side. These kinds of spectacular prediction errors by famous experts led to the formulation of Arthur C. Clarke's first law:

When a distinguished but elderly scientist states that something is possible, he is almost certainly right. When he states that something is impossible, he is very probably wrong.

The only way of discovering the limits of the possible is to venture a little way past them into the impossible.

Any sufficiently advanced technology is indistinguishable from magic.

This statement (1) is not to be over-interpreted. If some physicist tells you that perpetual motion machines are impossible, he will be right. The first law is only relevant when you have senior scientists claiming something is impossible, and young scientists claiming it may be possible (that is my interpretation, I didn't read the book). In that context, it is wise not to trust the elders too much. As such, this is just a fancier version of the general principle that old people tend to think many things are impossible until some younger person eventually proves them wrong (I asked Perplexity whether people used to claim climbing Mount Everest was impossible prior to it being done in 1953, but apparently not, and at least one prominent mountaineer claimed it was possible in 1885).

Anyway, so let's talk about the big question of our time: general artificial intelligence, usually just called GAI, or sometimes just AI. Is it possible? In some sense, we already have pretty functional AIs that are pretty general. You can talk to your AI friend or pseudo-romantic partner (current models pass the Turing test), look at AI-made art, listen to AI-made music, and of course, read lots and lots of AI-written content everywhere. AIs beat humans at essentially any game they are trained on (chess, Starcraft 2, DOTA 2, Go etc.), as long as these games rely on classical game mechanics, and not on human social skills. Many of these accomplishments were previously thought and declared impossible by many experts. So here we have a classic "line go up" argument in favor of AI being possible. Technological progress is rapid, and it is very bold to claim that we can't just keep adding more capabilities to the current direction of the technology. One would have to claim some kind of hard physical limit (e.g. the Shockley–Queisser limit for solar power). Is there some kind of fundamental limitation for how far we can take neural networks in building intelligence? Two philosophers have taken it upon themselves to argue the affirmative in a provocative book:

Why Machines Will Never Rule the World: Artificial Intelligence without Fear, Jobst Landgrebe & Barry Smith, 2022 (2nd edition April 2025)

The authors are kind enough to provide a semi-formal version of their argument:

Our argument can be presented here in a somewhat simplified form as follows:

A1. To build an AGI we would need technology with an intelligence that is at least comparable to that of human beings (from the definition of AGI provided above).

A2. The only way to engineer such technology is to create a software emulation of the human neurocognitive system. (Alternative strategies designed to bring about an AGI without emulating human intelligence are considered and rejected in Section 3.3.3 and Chapter 13.)

However,

B1. To create a software emulation of the behaviour of a system, we would need to create a mathematical model of this system that enables prediction of the system's behaviour.1

B2. It is impossible to build mathematical models of this sort for complex systems. (This is shown in Sections 9.4–9.7.)

B3. The human neurocognitive system is a complex system (see Chapter 8).

B4. Therefore, we cannot create a software emulation of the human neurocognitive system.

From (A2) and (B4) it now follows that:

C. An AGI is impossible.

Now, I like a good contrarian book. I've read works by socialists/egalitarians denying various aspects of human evolution (e.g. Paige-Harden), various right-wing haters (e.g. Michael Ryan), trust-the-experts (e.g. Naomi Oreskes), principled anti-psychiatrists (posts by Bryan Caplan who is really just repeating Thomas Szasz) and feel-good anti-psychiatrists (e.g. Peter Kinderman), AI-doomerism inspired by genetics (The Revolutionary Phenotype, the author didn't like my review), so why not also anti-AI? Honestly, I don't know whether we can build GAI, but I don't think anyone else does either. We can try and see. So far trying has led to amazing progress to the point that the entire economy is affected by AI-related growth. Maybe this is a fad (a dot-com-like bubble), or maybe not.

With respect to the authors, I don't find their book convincing. As a matter of fact, it had the opposite effect on my views. This somewhat paradoxical result can happen in certain Bayesian contexts. How can exposure to some evidence for X reduce your belief in X? If at a given time, you are not comprehensively familiar with the arguments for and against X, and you read some of the best arguments in favor (by some people) but find them unconvincing, this can be evidence against X, because it lowers your estimate of the probability that more convincing arguments exist (which they still might). I think this book accomplished that for me. This is a technical book that seeks to prove (G)AI is impossible using mathematical rigor (the book is full of equations and complicated science summaries). One of the authors (Barry Smith) is an accomplished philosopher in the relevant field. It's certainly not a stupid book. Nevertheless, my problem with their argument is premise (A2), which may be false.
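The Bayesian point can be made concrete with a small worked example. This is only a sketch: the prior and the two likelihoods below are made-up illustrative numbers, not estimates of anything, and `posterior` is a hypothetical helper name. The assumption doing the work is that a serious, book-length case for X is more likely to turn out unconvincing if X is false than if X is true.

```python
def posterior(prior, p_e_given_x, p_e_given_not_x):
    """Bayes' rule for a binary hypothesis X after observing evidence E."""
    numerator = prior * p_e_given_x
    return numerator / (numerator + (1.0 - prior) * p_e_given_not_x)

# X = "GAI is impossible"; E = "the best argument I read for X was unconvincing".
# All three numbers are illustrative assumptions.
prior_x = 0.30
p_unconvincing_if_x = 0.50      # if X is true, a strong case should be easier to make
p_unconvincing_if_not_x = 0.90  # if X is false, arguments for X will mostly fall flat

post = posterior(prior_x, p_unconvincing_if_x, p_unconvincing_if_not_x)
print(f"prior {prior_x:.2f} -> posterior {post:.2f}")
```

With these particular numbers the belief in X drops from 0.30 to about 0.19. The direction of the update depends only on the second likelihood being larger than the first, not on the exact values chosen.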

Most of their book is about showing that we cannot really model many things in physics because the mathematical problems are intractable complex systems. They provide the amusing example of turbulence, something seemingly simple:

An interesting example of the use of scaling to describe complex systems is the theory of turbulence formulated by Kolmogorov (1941b, 1941a). This provides an explanation of one aspect of the behaviour of the phenomenon, though with massive limitations.

Turbulence is, according to Richard Feynman, ‘the most important unsolved problem of classical physics’ (Feynman, Leighton, and Sands 2010). This remains true today, and there is still no complete description of the phenomenon. Werner Heisenberg is said to have given the following reply when he was asked what questions he would ask God, if given the opportunity: ‘When I meet God,’ he replied, ‘I am going to ask him two questions: Why relativity? And why turbulence? I really believe he will have an answer for the first.’ (Marshak and Davis 2005, p. 76).

Turbulence is fluid motion characterised by chaotic changes in pressure and flow velocity. Figure 9.1 shows a famous sketch by Leonardo depicting the turbulence occurring when a water jet issues from an outfall into a pool. It is a ubiquitous phenomenon, ensuing for example when smoke rises from a cigar, in all waterflows, and in many complex systems: for example, the global climate, the weather, in respiratory systems of breathing animals, or in the circulatory systems of vertebrates such as ourselves. Air turbulence is one of the factors that allows the trumpeter to effectuate the oscillating motion of the lips needed to produce Aeolian tones. Indeed: ‘The whole mechanism of producing musical tones on brass instruments is a function of the transfer of laminar air to turbulent air to create pressure’ (Oare, June 17, 2018).

And yet, we can build planes that work fine even though they depend on interacting correctly with turbulent forces. We have some approximations and we built wind tunnels, and the planes keep flying, so this works well enough. History of technology provides numerous examples of technologies that were invented before the science was remotely understood: metallurgy (used in pre-historical times without any real knowledge of elements, physics, or chemistry), Roman concrete (no real understanding of materials science), gunpowder (before anyone had any idea about elements [and no, Greeks didn't know about elements, Greek atoms are not the atoms of physics, since they are obviously divisible]), and of course, steam power was invented before thermodynamics. So why not AI too before deep neuroscience?

The most egregious example of their claims on this topic concern one of my favorite technologies, genetic engineering and embryo selection:

It is currently fashionable to state that ‘advances in biotechnology will allow a direct control of human genetics and neurobiology’ (Bostrom 2003b, p. 36),15 which can then be used to enhance the functioning of the human brain. Bostrom claims that pre-implantation diagnosis of complex traits in embryos could be used to select more intelligent individuals. He calculates that a selection of embryos can yield a logarithmic increase of up to 24.3 (sic) IQ points if 999 out of every 1000 embryos are discarded for one generation, and of 300 points if 90% of all embryos are discarded in each of ten successive generations (op. cit. p. 37). Bostrom also proposes to speed up his enhancement strategy by using stem-cell derived gametes (sperm and oocytes), which would enable the creation of thousands of embryos from one single couple within a matter of weeks.

Why is this sheer nonsense?

Firstly, we cannot genetically diagnose complex traits such as intelligence. It is not true that it requires only ‘(lots of) data on the genetic correlates of the traits of interest’ (op. cit. p. 37). This is because, as we saw in detail in Section 8.6.1, for complex traits such as intelligence the correlation between genetic code and phenotype is insufficient to obtain the sort of stochastic plausibility (p-value) required to drive an embryo-selection process; the correlation between the two is much too weak.16 Associations of hundreds of thousands of genetic loci contribute to such complex traits, which arise from interactions involving trillions of gene products at the cellular and inter-cellular levels. Because these interactions occur among gene products (primarily different types of RNA and proteins as well as the peptide, lipid, and other molecules they generate), and not at the level of the DNA, it is impossible that we will be able to infer them from the linear DNA-sequence. It is for this reason that such associations have not enabled any explanatory or causal modelling of mental traits (Tam et al. 2019), let alone enabled the sort of embryo selection proposed by Bostrom.

Second, even if an enhancement of intelligence through selection were possible, it is unscientific to claim an ability to estimate its effects in terms of increases in ‘IQ points’. An estimation of this sort would require the ability to identify the relative intelligence potential of embryos on the basis of differences manifesting variability along hundreds of thousands of dimensions, where we have not only no mechanism for measuring the differences involved but also no idea at all how we might integrate across such measures.

Third, there is no evidence that the IQ scale is open at the upper end. Everything that we know about evolutionary history tells us that the reach of human intelligence is with high likelihood structurally limited by neurophysiological features of the brain (Thorstad 2024).

Fourth, assuming that there will not be millions of couples ready and willing (and legally authorised) to provide their natural gametes for in vitro fertilisation and selection, the likelihood that there will be enough viable stem-cell derived gametes that can yield healthy individuals is close to zero. For to achieve this, we would have to complete the following steps:

bring about the differentiation of omnipotent stem cells to yield gamete-progenitor cells either by using (bio)pharmacological stimuli or by direct genetic alteration of the cells and

engineer the maturation of these progenitors to gametes in vitro, which means maturation from spermatogonia to sperm for the male line, and from oogonium to ovum for the female line. Unfortunately, however, we lack the natural environments (testis and ovary, respectively, with their respective cellular and signalling environment) for this maturation to occur, and thus we also lack the correct nuclear and cytosolic molecular configuration, which seems impossible to obtain outside the natural environment.

Again, therefore, the idea of producing many embryos for testing and selective deletion is not only immoral, but also impossible to achieve (certainly impossible to achieve in a way that would lead to an increased IQ in the resulting population).

The problem for them is that 1) nature already used embryo selection in the slow way (that is, produce multiple siblings and select some of them the hard way) to produce and enhance intelligence (human and other animals'), and nature is a blind process with no knowledge of anything, and 2) you don't need to understand how DNA produces intelligence or how the brain works in detail to tinker with it. Humans have been breeding numerous plants and animals to their liking for 10k years, with the result that we now have huge corn plants, fast-growing mega-chickens (you know the picture), massive-udder-supermilking cows and so on. Of course, you can say that this was done using the slow method of looking at phenotypes and selecting among them (artificial selection), not direct embryo (genetic) selection. Yes, most of this was done this way, but direct genomic selection (genomic breeding, and various other names) has been used for 20+ years already with good results. It's not difficult to find reviews of the success of these methods.

This success is despite the fact that we have no particular model for how the complex system of corn or cow development happens. We don't need to, we just need to have a model that predicts the phenotype based on the genome, and then pick embryos based on that. It doesn't have to be perfect, just better than nothing. We already have such models for humans that predict intelligence with a correlation of 0.40, and height with 0.70 or so, and so on for many other phenotypes. So what they claim to be impossible is already reality. And we can't just claim that the phenotypes selected for so far have been purely physical (if one wanted to adopt a kind of dualism-stance, which the authors explicitly reject), since many animals are also bred for non-aggression, a psychological feature (since aggression costs money).
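The "better than nothing" point can be checked with a toy simulation. The sketch below is a statistical illustration only, not a model of real genetics: the trait is standardized to mean 0 and SD 1 among the candidate embryos, `r` is an assumed correlation between predictor score and trait among those same embryos (which need not equal a population-level figure like the 0.40 above), and `mean_gain` is a hypothetical helper name.

```python
import random
import statistics

def mean_gain(n_embryos, r, trials=10000, seed=0):
    """Average trait value (in SD units) of the embryo with the highest
    predictor score, when score and trait correlate at r."""
    rng = random.Random(seed)
    noise_scale = (1.0 - r * r) ** 0.5
    picked = []
    for _ in range(trials):
        best_score, best_trait = float("-inf"), 0.0
        for _ in range(n_embryos):
            trait = rng.gauss(0.0, 1.0)
            # Noisy predictor: standardized, correlates with the trait at r.
            score = r * trait + noise_scale * rng.gauss(0.0, 1.0)
            if score > best_score:
                best_score, best_trait = score, trait
        picked.append(best_trait)
    return statistics.fmean(picked)

for r in (0.0, 0.2, 0.4):
    gain = mean_gain(10, r)
    print(f"r = {r:.1f}: mean trait of the top-scoring embryo out of 10 = {gain:+.2f} SD")
```

A useless predictor (r = 0) gives a gain near zero, and the gain grows roughly in proportion to r. Nothing in the simulation requires a causal model of how the trait develops; the predictor only has to correlate with the outcome.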

Similarly, their long paragraph treats the difficulty of working out how gametes combine into embryos and grow into adults as a problem for gametogenesis technologies. We don't know how this works in detail, but we don't have to. Researchers have already made egg cells from stem cells in mice and produced viable offspring based on this method. This same kind of method can of course be replicated in humans, and several companies are working on this feat (with the financial backing of rich gays). I have no doubt they will succeed in the near future barring some ban on this technology. When they do, this will remove one of the blocking problems for the iterated embryo selection method (there are others, especially inbreeding and genetic variation issues as the embryos would have to mate with each other in the intermediate generations).

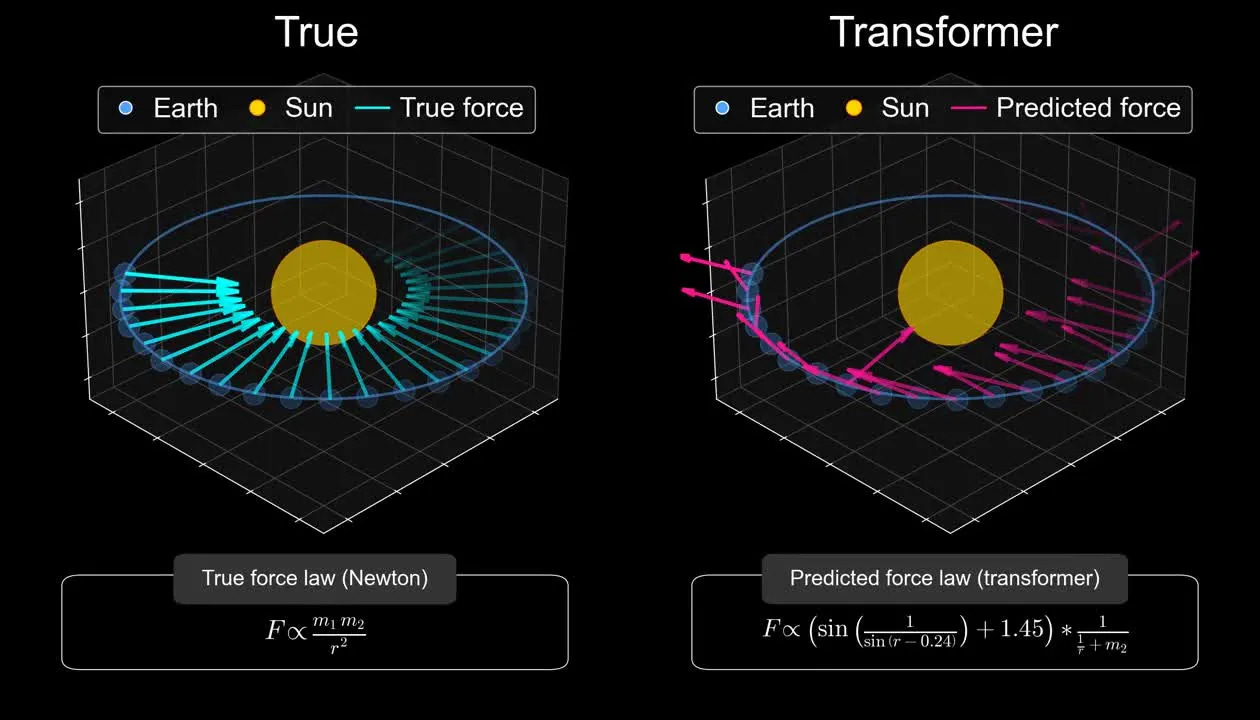

So how might we build (general) artificial intelligence? Currently, our best approach is a sophisticated autocomplete model piggybacking on human linguistic output. This AI is thus not built from scratch, but is basically a mimic of human intelligence. These AIs have gotten pretty far but routinely fail spectacularly when applied to data outside of their training distribution (the authors talk a lot about this). In other words, they lack the ability to draw fundamental insights about root causes, and in general, they lack the coherent world building and causal reasoning that humans possess. A recent example of this was an AI (that is, artificial neural network) trained to predict the orbits and physics of stars and planets. It did so very well, but not because it understood physics:

One of the key arguments by the authors is that real-life intelligence involves updating continuously to new data and adjusting models. There is a subset of mathematical models that can do this (called dynamic updating in time series forecasting science). These models are usually quite crude in the sense that all they do is re-estimate some parameters as new data comes in. This feat can also be accomplished with ANNs ('post-training'), but is similarly limited. Humans have a much more general ability to adapt to seemingly any new context. So far we have no idea how to make ANNs acquire this ability and that is a key limitation. This is also why it is relatively easy to come up with new intelligence test questions that current AIs fail spectacularly at (the go-to resource for this is ARC). All you have to do is invent some new kind of pattern finding task or visual representation that the AI hasn't already seen 10,000 times. As such, a more suitable Turing test is whether a given AI performs at least at human-level on any test we can come up with, including ones freshly invented for the purpose of fooling it. If at some point we can no longer come up with such tests, then maybe we have gotten to the GAI stage. I don't see any particular reason why human-like general intelligence should be impossible to build in principle. After all, the blind process of evolution built it without knowing anything about how brains work.
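To see what "re-estimate some parameters as new data comes in" amounts to in the crudest case, here is a minimal sketch using simple exponential smoothing. This is one standard choice picked for illustration, not necessarily the kind of model the authors have in mind; the function name, the `alpha` value and the toy series are all assumptions.

```python
def online_level(observations, alpha=0.3):
    """Exponentially weighted running estimate of a series' level.

    Each new observation nudges the single parameter `level`:
        level <- level + alpha * (observation - level)
    """
    level = observations[0]
    history = [level]
    for obs in observations[1:]:
        level += alpha * (obs - level)
        history.append(level)
    return history

# Toy series whose level jumps from 10 to 20 after five observations.
series = [10.0] * 5 + [20.0] * 10
estimates = online_level(series)
print([round(e, 2) for e in estimates])
```

The estimate does adapt to the jump, but only because the change is of exactly the kind the model was built to track (a shift in level). Hand it a new kind of structure, say a seasonal pattern, and it has no way to represent it, which is the limitation described above.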

The authors spend a lot of time explaining the detail of neurology in an effort to show how little we know, and how futile current efforts at uploading are. Granted, the current methods are far too crude, so they are right about that. However, they don't consider that instead of simulating all of physics from the bottom up (which we can't because it takes too much compute, our physics is incomplete and inconsistent, and involves impossible mathematical solutions), we could simulate a simplified physics from the bottom up and guide the evolution (basically, theistic evolution in a simulation). This is in fact similar to how many AIs are trained to become better than humans at numerous complex tasks such as driving cars really fast in video games. You should watch this video:

It is more relevant to the book than one might think. Even training AIs to drive Trackmania using modern reinforcement learning results in seeming randomness, and the cause appears to be that the simplified physics engine in the game is chaotically complex (tiny seemingly irrelevant differences in initial conditions result in massive changes downstream) and the ANNs are unable to anticipate these changes and adjust correctly (humans do much worse). Or so it appears anyway. The video also covers the numerous issues in training ANNs using reinforcement learning (the primary advantage of which is that it is a fast optimizer) since it uses proxy endpoints that are hard or maybe sometimes impossible to set up correctly (i.e., misalignment). Evolution did not have this problem because it only used a hard endpoint (successful reproduction and further survival of offspring).
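Sensitivity to initial conditions does not need a complicated system. As a stand-in (this is not the Trackmania physics engine, just a textbook chaotic system), the sketch below iterates the logistic map x → 4x(1 − x) from two starting points that differ by one part in ten billion. The starting value, the offset and the step count are arbitrary choices.

```python
def logistic_orbit(x0, steps, r=4.0):
    """Iterate the logistic map x -> r * x * (1 - x) and return the whole orbit."""
    x = x0
    orbit = [x]
    for _ in range(steps):
        x = r * x * (1.0 - x)
        orbit.append(x)
    return orbit

a = logistic_orbit(0.2, 60)
b = logistic_orbit(0.2 + 1e-10, 60)   # almost identical starting point
gaps = [abs(p - q) for p, q in zip(a, b)]

for step in (0, 10, 20, 30, 40, 50, 60):
    print(f"step {step:2d}: |difference| = {gaps[step]:.3e}")
```

The gap starts negligible and grows roughly exponentially until the two orbits are unrelated. The rule is a one-line deterministic formula, so long-range prediction fails without any randomness or hidden complexity in the system.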

Watching this video also reminded me of another source of problems with AIs compared to human intelligence: they are terribly slow learners. Human children can accurately classify animals and objects even after a single exposure, whereas many AIs still are easily tricked with trivial changes (in human eyes) even after seeing millions of examples. ANNs do not generalize in the same way that humans do. There is something wrong with the architecture of ANNs as they exist now. It is not difficult to convince yourself of this if you read about actual biological NNs, which are exceedingly complicated machines involving 1,000s of different entities (cell types, synapses, transmitters, hormones and so on) working together in a complicated way that is beyond human capabilities to understand. Nevertheless, there is no proof here or anywhere that improvements cannot be found here, or that such improvements cannot get us far enough. No one says that to build intelligence one has to emulate the solution evolution found. One may be able to find some other, simpler ways to accomplish the same thing. As a matter of fact, there are lots of obvious inefficiencies in biological systems. The favorite example of evolution enjoyers is the recurrent laryngeal nerve, a branch of the vagus nerve. In many animals it serves the larynx, yet instead of taking the short route in the throat area, it makes a detour down towards the heart to do a U-turn. As such, in a giraffe, it is 5 meters long instead of a few centimeters. No intelligent designer would make such a mistake.

Since we don't really understand how to design intelligent systems, and our best approach involves building a system that can mimic human efforts at various tasks (auto-completion of texts, descriptions of images), perhaps a more fundamental solution is to design a game of life and replicate evolution in a simpler physics system (like that of Trackmania or Minecraft, inspired by Game of Life). This is akin to evolutionary algorithms, though the starting blocks would not be a pre-specified neural network architecture, but rather whatever the minimum life form in that physics universe would look like, something close to the last universal common ancestor (LUCA), but simplified enough so that we can run time in the universe on very-fast-forward mode.
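For readers who have not seen one, the skeleton of an evolutionary algorithm is short. The sketch below is a deliberately tiny toy, far from the open-ended simulated universe proposed above: genomes are fixed-length bit lists, "fitness" is simply the number of 1-bits, and reproduction is copying with random mutation. Every name and parameter here is an illustrative assumption.

```python
import random

def evolve(genome_len=40, pop_size=30, generations=200, mut_rate=0.02, seed=1):
    """Mutation plus truncation selection on bit-list genomes.

    Returns the best fitness seen in each generation.
    """
    rng = random.Random(seed)
    fitness = sum  # hard endpoint: the count of 1-bits in a genome
    pop = [[rng.randint(0, 1) for _ in range(genome_len)] for _ in range(pop_size)]
    best_per_gen = []
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        best_per_gen.append(fitness(pop[0]))
        survivors = pop[: pop_size // 2]          # the fitter half survives
        children = [
            [bit ^ 1 if rng.random() < mut_rate else bit for bit in parent]
            for parent in survivors               # each survivor leaves one mutated copy
        ]
        pop = survivors + children
    return best_per_gen

history = evolve()
print("best fitness, first generation:", history[0])
print("best fitness, last generation: ", history[-1])
```

The loop never "knows" why a genome scores well; it only copies, mutates and culls. The gap between this toy and the proposal is in what gets evolved and where: the idea above is to let the genome, body and environment all live inside a simplified physics rather than to score a fixed-length bit list.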

Anyway, I recommend the book for those interested in the possibility of superhuman artificial intelligence, and the worries related to that.

The example of turbulence is apt. Kolmogorov’s theory and subsequent developments along those lines are the most we can probably expect. As noted in the post, useful results and devices (aircraft) can be designed with the current understanding. Computational fluid dynamics is somewhat helpful but most of the predictive value in the physics of fluids comes from semi-empirical and heuristic methods like dimensional analysis.

The trouble with turbulent fluids is not complexity as much as it is chaos. As Edward Lorenz showed back in the 1960s, extremely simple systems exhibit deterministic chaos. I suspect chaos plays a role in the brain, which means it does not lend itself to closed-form, analytic solutions. So what? Airplanes still fly and a human-designed GAI can be created. Take it from an older, albeit not distinguished, physicist.

It may be possible to build GAI. I suspect (not having read the book) that it is possible. That doesn't mean that current methods (i.e. LLMs and their close relatives) are the way to do it.

In fact, from my understanding of how LLMs work, I think that they are dead ends like the various analog computers developed in the early/mid twentieth century.