Steam game ratings don't show a recency effect

A study of 1000 computer games and their rating histories

Due to a data error, some of these findings are not entirely true. See next post.

Previously, I showed that IMDB movie ratings are inflated for recent movies. I speculated this was due to fanbois going to the cinema to watch them first, and later when average Joe and Jane watch them at home, they don’t think the movies are that great. Or maybe it’s because people rate things more highly when they went out of their way to pay to see them in the cinema. A less likely mechanism is that studios employ an army of bots to upvote their recent releases. Maybe you have another hypothesis which you can note in the comments.

I decided to check if recency bias exists in Steam game ratings. Steam has an API that helpfully lets you query every single review for a game in chronological order (text and yes/no vote). So after giving this task to Claude and waiting a few hours, I now have a dataset of ratings for 1000 games. For the first half, I took the top 500 games from SteamDB’s top list. That list uses a homemade pseudo-Bayesian shrinkage method to avoid filling the top with games that have a 100% rating based on 5 reviews. For the second half, I sampled 500 games at random to get a representative sample. One caveat is that I limited the scraping to the first 50k votes for a given game to save time (some games have millions). The data look like this:

Mean rating as a function of review count:

Looking at the representative games (sampled at random), there is a weak positive relationship between the number of ratings and the mean rating (Spearman r = 0.12), whereas for the top games it is negative (r = -0.67!). The range restriction and ceiling issues for the top games make the correlation difficult to interpret, but presumably the main reason is just regression towards the mean. We could also speculate that as games expand their player base, they reach people who are less enthusiastic about that particular genre of game.
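For readers who want to see what a Spearman correlation does mechanically, here is a minimal sketch in plain Python: it ranks both variables (ties get the average rank) and then takes the ordinary Pearson correlation of the ranks. The function names and the toy numbers are made up for illustration; this is not the code used for the analysis.

```python
def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Ordinary Pearson correlation of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented toy data: review counts and positive share for five games.
review_counts = [120, 900, 4_000, 15_000, 50_000]
positive_share = [0.80, 0.78, 0.85, 0.83, 0.90]
rho = spearman(review_counts, positive_share)
```

Because only the ranks matter, a few games with millions of reviews cannot dominate the result the way they would with a Pearson correlation on raw counts.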

Anyway, more interesting is looking at a game’s rating over time. Are they overrated when they come out and then decline? I can think of 3 metrics for studying this: 1) age of review, 2) review count, 3) proportional review count. We can try each of them. First, age:

Note the sample is limited to games with at least 5 years of data (so released in 2021 or before). This is because otherwise some games would contribute data to e.g. year 2, but nothing to year 5, and so the games used to estimate each point in the lines would change with age, and thus may cause spurious effects (a kind of selection bias). The lines are essentially flat, noise aside. Review count:

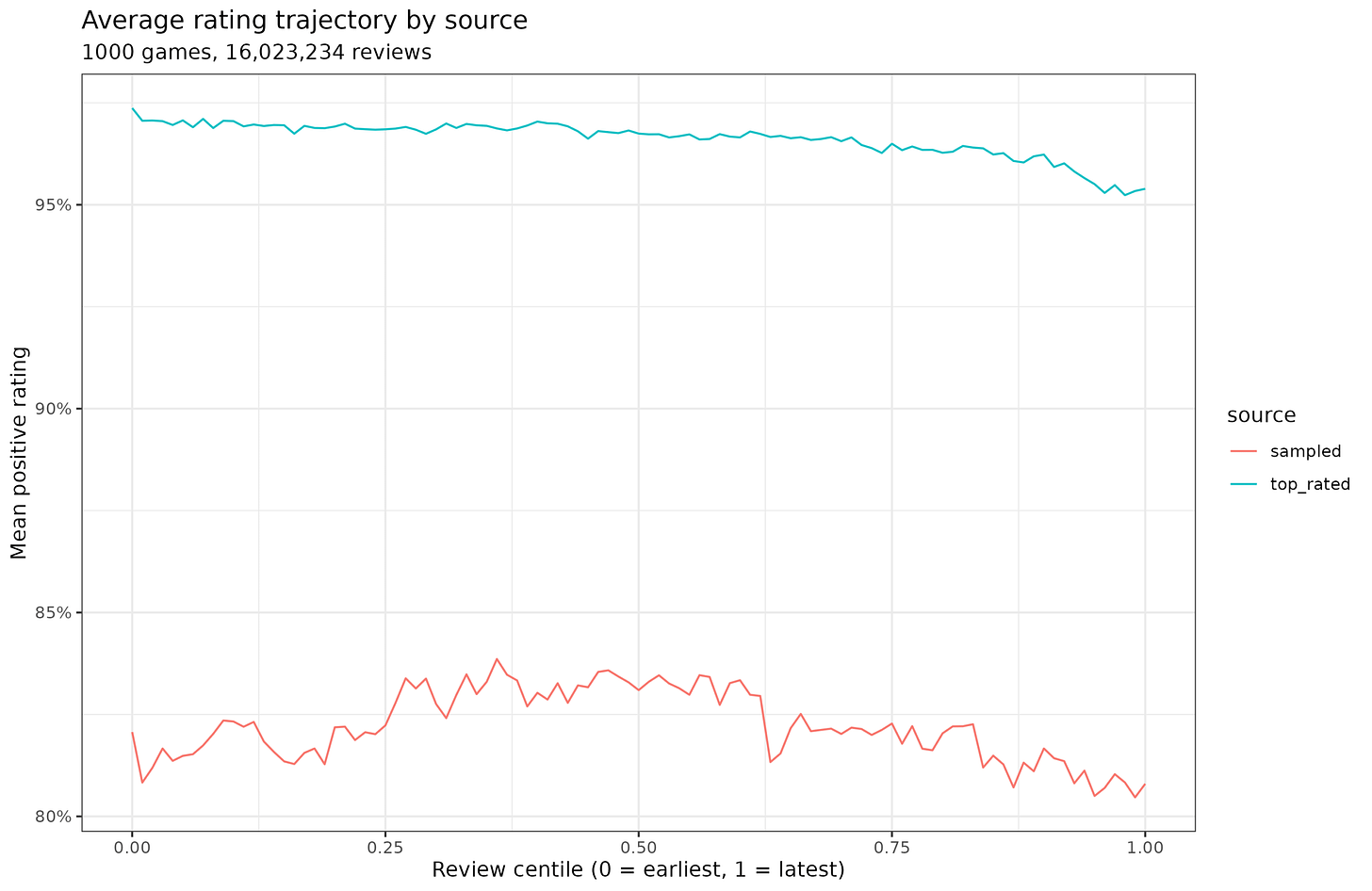

The games are limited to those with at least 10k reviews. There’s a slight positive slope for the representative games, and nothing much for the top ranked. Proportional counts:

When examined this way, we don’t need to exclude games, since every game has its first and last reviews, whether it has 100 or 10M reviews. There’s a bit of a negative slope for the top ranked ones, and nothing much for the representative games.
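To make the proportional metric concrete, here is a small sketch of how one might compute it: each review gets a position between 0 and 1 in its game's chronological sequence, and positions are grouped into equal-width bins. The function name, the choice of 10 bins, and the toy votes are my assumptions for illustration, not necessarily what was used for the plot.

```python
def positive_share_by_position(votes, n_bins=10):
    """Share of positive votes per relative-position bin.

    votes: a game's reviews in chronological order, True = recommended.
    Returns a list of n_bins shares; a bin with no reviews gets None.
    """
    n = len(votes)
    ups = [0] * n_bins
    totals = [0] * n_bins
    for i, vote in enumerate(votes):
        # Review i sits at relative position i/n in [0, 1);
        # integer arithmetic maps it to a bin without float rounding.
        b = i * n_bins // n
        ups[b] += vote
        totals[b] += 1
    return [u / t if t else None for u, t in zip(ups, totals)]


# Invented toy game: 20 reviews, the first half all positive,
# the second half alternating positive and negative.
toy_votes = [True] * 10 + [True, False] * 5
shares = positive_share_by_position(toy_votes, n_bins=4)
```

A game with 100 reviews and one with 10M reviews both end up with the same number of bins, which is why no game has to be dropped under this metric.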

The more statistically sound way to model this is using fixed effects models. These show:

The fixed effects models have an intercept (mean) for each game, so only the within-game variation is used for estimation. Each game then has its slope estimated, and these are averaged at the end. Doing this, we see that among the top games, there is some decline, and it doesn’t really matter whether we model this using age, count, or relative counts (R² values are about 2%), whereas for the representative games we find nothing. Thus, maybe top games have some slight decline, but it’s very minor. If we use the age model, the slope is -0.002 per year, meaning that a new top game would be rated about 5 × 0.002 = 0.01, or 1 percentage point, lower after 5 years than at release.
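Here is a rough sketch of the within-game idea, using plain per-game least-squares fits rather than a proper fixed effects package. Fitting each game separately gives every game its own intercept, so differences in baseline rating between games cannot leak into the slope. The two games and their numbers are invented, and the helper names are mine; treat it as an illustration of the logic, not a reproduction of the models above.

```python
def ols_slope(x, y):
    """Least-squares slope of y on x (the intercept is fitted implicitly)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sxx


def mean_within_game_slope(games):
    """games: dict of name -> list of (age_in_years, positive_share)."""
    slopes = [
        ols_slope([age for age, _ in obs], [share for _, share in obs])
        for obs in games.values()
    ]
    return sum(slopes) / len(slopes)


# Invented example: both games decline by 0.002 per year, but game_b has a
# much lower baseline and is only observed at later ages.
games = {
    "game_a": [(0, 0.950), (1, 0.948), (2, 0.946)],
    "game_b": [(3, 0.694), (4, 0.692), (5, 0.690)],
}

within = mean_within_game_slope(games)

# Pooling all points ignores the per-game baselines and mixes the
# between-game gap into the slope, which makes the decline look far steeper.
pooled_points = [p for obs in games.values() for p in obs]
pooled = ols_slope([a for a, _ in pooled_points], [s for _, s in pooled_points])

# Implied change over five years on the 0-1 scale (about one percentage point).
five_year_change = 5 * within
```

In this toy case the within-game slope recovers the -0.002 built into the data, while the pooled slope is dominated by the gap between the two games.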

I didn’t really find much else of interest in the data. So recency bias definitely works differently for movies rated on IMDB vs. games released on Steam. That’s psychologically interesting. If it were just that fanbois buy a game first and rate it higher, we would expect to see this for the Steam games too, but we don’t. So I don’t know why this is.

There is something deeply noble about doing a load of honest work, finding nothing out and posting it anyway.

It would be interesting to see if ratings change much at all between games being in early access vs. full release, assuming that the Steam API surfaces that status.